Ed Zitron has been relentlessly pursuing the questionable economics of AI and has tentatively identified a bombshell in his latest post, Exclusive: Here’s How Much OpenAI Spends On Inference and Its Revenue Share With Microsoft. If his finding is valid, large language models like ChatGPT are much farther from ever becoming economically viable than even optimists imagine. No wonder OpenAI chief Sam Altman has been talking up a bailout.

By way of background, over a series of typically very long and relentlessly documented articles, Zitron has demonstrated (among many other things) the absolutely enormous capital expenditures of the major AI incumbents versus comparatively thin revenues, let alone profits. Zitron’s articles on the massive cash burn and massive capital misallocation that AI represents have the work of Gary Marcus on fundamental performance shortcomings as de facto companion pieces. A sampling of Marcus’ badly needed sobriety:

5 recent, ominous signs for Generative AI

For a quick verification of how unsustainable OpenAI’s economics are, see the opening paragraph from Marcus’ November 4 article, OpenAI probably can’t make ends meet. That’s where you come in:

A few days ago, Sam Altman got seriously pissed off when Brad Gerstner had the temerity to ask how OpenAI was going to pay the $1.4 trillion in obligations he was taking on, given a mere $13 billion in revenue.

By way of reference, most estimates of the size of the subprime mortgage market centered on $1.3 trillion. And the AAA tranches of the bonds on mortgage pools were money good in the end, although they did fall in value during the crisis when that was uncertain. And in foreclosures, the homes nearly always had some liquidation value.

Now to Zitron’s latest.

Many, particularly AI advocates in the business press, contend that even if the AI behemoths go bankrupt or are otherwise duds, they will still leave something of considerable value, as the building of the railroads (which spawned many bankruptcies) or the dot-com bubble did.

But these assumptions seem to be often based on a naive view of AI economics, that having made a huge expenditure on training, the ongoing costs of running queries are not high and will drop to bupkis. This was the case with railroads, which had high fixed costs and negligible variable costs. The network effects of Internet companies produce similar results, with scale increases producing both considerable user benefits and lowering per-customer costs.

That’s not the case with AI. Not only are there very large training costs, there are also “inference” costs. And they aren’t just considerable; they’ve vastly exceeded training costs. The viability of AI depends on inference costs dropping to a comparatively low level.

Zitron’s potentially devastating find is breadcrumbs that suggest that OpenAI’s inference costs are considerably higher than they pretend. Zitron further posits that the user prices for ChatGPT considerably subsidize the inference expenses. Because the reporting on AI economics by all the big players is so abjectly awful, Zitron’s allegations may well pan out.

First, a detour to explain more about inference. From Primitiva Substack’s All You Need to Know about Inference Cost from the end of 2024. Emphasis original:

Over the first 16 months after the launch of GPT-3.5, the market’s attention was fixated on training costs, often making headlines for their staggering scale. However, following the wave of API price cuts in mid-2024, the spotlight has shifted to inference costs, revealing that while training is expensive, inference is even more so.

According to Barclays, training the GPT-4 series required approximately $150 million in compute resources. Yet, by the end of 2024, GPT-4’s cumulative inference costs are projected to reach $2.3 billion, 15x the cost of training.

As an aside, Gary Marcus pointed out in October that GPT-5 did not arrive in 2024 as had been predicted and has been disappointing. Back to Primitiva:

The September 2024 release of GPT-o1 further accelerated the shift in compute demand from training towards inference. GPT-o1 generates 50% more tokens per prompt compared to GPT-4o, and its enhanced reasoning capabilities result in the generation of inference tokens at 4x the output tokens of GPT-4o.

Tokens, the smallest units of textual data processed by models, are central to inference compute. Typically, one word corresponds to about 1.4 tokens. Each token interacts with every parameter in a model, requiring two floating-point operations (FLOPs) per token-parameter pair. Inference compute can be summarized as:

Total FLOPs ≈ Number of Tokens × Model Parameters × 2 FLOPs.
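As an illustrative aside (not part of the quoted piece), the rule of thumb above can be turned into a rough back-of-the-envelope calculator. The two constants come from the quoted text; the model size and response length are purely hypothetical placeholders chosen to show the arithmetic, not figures for any real model.

```python
# Rough sketch of the quoted rule of thumb:
#   total FLOPs ~= number of tokens x model parameters x 2
FLOPS_PER_TOKEN_PARAM = 2   # from the quoted text
TOKENS_PER_WORD = 1.4       # from the quoted text


def words_to_tokens(num_words: float) -> float:
    """Approximate token count for a given number of words."""
    return num_words * TOKENS_PER_WORD


def inference_flops(num_tokens: float, num_params: float) -> float:
    """Approximate FLOPs needed to process num_tokens with a num_params model."""
    return num_tokens * num_params * FLOPS_PER_TOKEN_PARAM


# Hypothetical inputs, for illustration only.
hypothetical_params = 100e9   # assumed 100-billion-parameter model
hypothetical_words = 500      # assumed length of one response

tokens = words_to_tokens(hypothetical_words)
flops = inference_flops(tokens, hypothetical_params)
print(f"{tokens:.0f} tokens -> roughly {flops:.2e} FLOPs")
```

The point of the sketch is that the cost scales linearly with both tokens generated and model size, so a model that emits several times more tokens per prompt multiplies compute by the same factor.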

Compounding this volume expansion, the price per token for GPT-o1 is 6x that of GPT-4o, resulting in a 30-fold increase in total API costs to perform the same task with the new model. Research from Arizona State University shows that, in practical applications, this cost can soar to as much as 70x. Understandably, GPT-o1 has been available only to paid subscribers, with usage capped at 50 prompts per week….

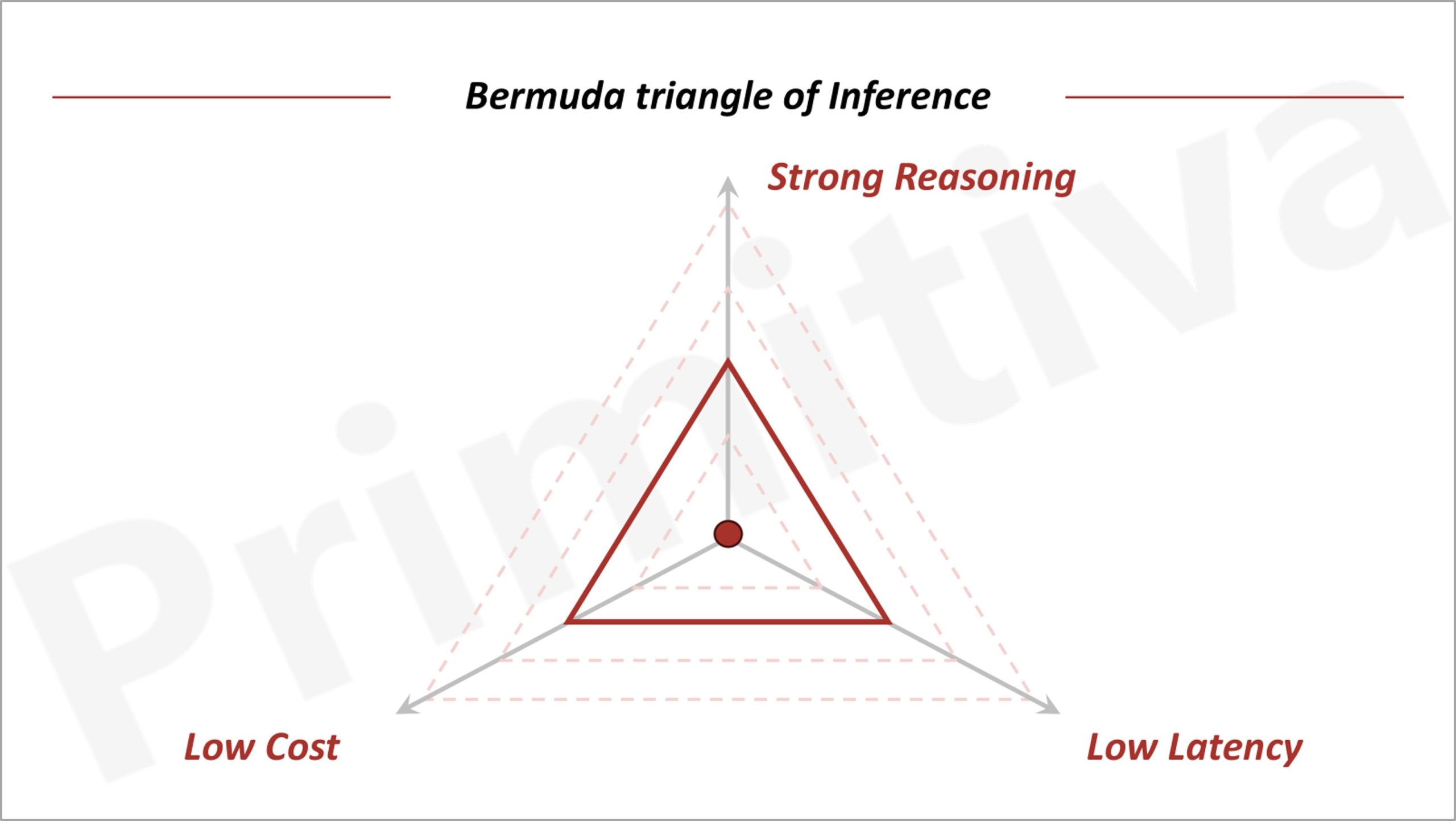

The cost surge of GPT-o1 highlights the trade-off between compute costs and model capabilities, as theorized by the Bermuda Triangle of GenAI: everything else equal, it’s impossible to make simultaneous improvements in inference costs, model performance, and latency; improvement in one will necessarily come at the sacrifice of another.

However, advancements in models, systems, and hardware can expand this “triangle,” enabling applications to lower costs, enhance capabilities, or reduce latency. Consequently, the pace of these cost reductions will ultimately dictate the speed of value creation in GenAI….

James Watt’s steam engine was such an example. It was invented in 1776, but took 30 years of innovations, such as the double-acting design and centrifugal governor, to raise thermal efficiency from 2% to 10%, making steam engines a viable power source for factories…

For GenAI, inference costs are the equivalent barrier. Unlike pre-generative AI software products, which were regarded as a superior business model to “traditional companies” largely because of their near-zero marginal cost, GenAI applications have to pay for GPUs for real-time compute.

Zitron is suitably cautious about his findings; perhaps some heated denials from OpenAI will clear matters up. Do read the entire post; I have excised many key details as well as some qualifiers to highlight the central concern. From Zitron:

Based on documents viewed by this publication, I am able to report OpenAI’s inference spend on Microsoft Azure, in addition to its payments to Microsoft as part of its 20% revenue share agreement, which was reported in October 2024 by The Information. In simpler terms, Microsoft receives 20% of OpenAI’s revenue….

The numbers in this post differ from those that have been reported publicly. For example, previous reports had said that OpenAI had spent $2.5 billion on “cost of revenue” – which I believe are OpenAI’s inference costs – in the first half of CY2025.

According to the documents viewed by this publication, OpenAI spent $5.02 billion on inference alone with Microsoft Azure in the first half of Calendar Year CY2025.

As a reminder: inference is the process through which a model creates an output.

This is a pattern that has continued through the end of September. By that point in CY2025, three months later, OpenAI had spent $8.67 billion on inference.

OpenAI’s inference costs have risen consistently over the last 18 months, too. For example, OpenAI spent $3.76 billion on inference in CY2024, meaning that OpenAI has already doubled its inference costs in CY2025 through September.

Based on its reported revenues of $3.7 billion in CY2024 and $4.3 billion in revenue for the first half of CY2025, it seems that OpenAI’s inference costs easily eclipsed its revenues.

Yet, as mentioned previously, I am also able to shed light on OpenAI’s revenues, as these documents also reveal the amounts that Microsoft takes as part of its 20% revenue share with OpenAI.

Concerningly, extrapolating OpenAI’s revenues from this revenue share does not produce numbers that match those previously reported.

According to the documents, Microsoft received $493.8 million in revenue share payments in CY2024 from OpenAI, implying revenues for CY2024 of at least $2.469 billion, or around $1.23 billion less than the $3.7 billion that has been previously reported.

Similarly, for the first half of CY2025, Microsoft received $454.7 million as part of its revenue share agreement, implying OpenAI’s revenues for that six-month period were at least $2.273 billion, or around $2 billion less than the $4.3 billion previously reported. Through September, Microsoft’s revenue share payments totalled $865.9 million, implying OpenAI’s revenues are at least $4.329 billion.
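As an aside from the quoted text, the extrapolation Zitron describes is simple enough to check by hand. The sketch below assumes, as the quoted passage does, that a flat 20% share applies to all revenue, so dividing each payment by 0.20 gives a floor on revenue. The payment and reported-revenue figures are the ones quoted above; the function and variable names are made up for illustration.

```python
REVENUE_SHARE = 0.20  # Microsoft's share of OpenAI revenue, per the quoted reporting


def implied_revenue(share_payment_musd: float, share: float = REVENUE_SHARE) -> float:
    """Minimum revenue (in $ millions) implied by a revenue-share payment."""
    return share_payment_musd / share


# period -> (revenue-share payment in $M, previously reported revenue in $M or None)
periods = {
    "CY2024": (493.8, 3700.0),
    "H1 CY2025": (454.7, 4300.0),
    "CY2025 through September": (865.9, None),
}

for label, (payment, reported) in periods.items():
    implied = implied_revenue(payment)
    line = f"{label}: implied revenue of at least ${implied / 1000:.3f}B"
    if reported is not None:
        shortfall = (reported - implied) / 1000
        line += f", about ${shortfall:.2f}B below the reported figure"
    print(line)
```

If the share were applied to only part of OpenAI's revenue, or the rate were not a flat 20%, the implied totals would change, which is one reason the quoted figures are framed as minimums.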

According to Sam Altman, OpenAI’s revenue is “well more” than $13 billion. I am not sure how to reconcile that statement with the documents I have viewed….

Due to the sensitivity and significance of this information, I am taking a far more blunt approach with this piece.

Based on the information in this piece, OpenAI’s costs and revenues are potentially dramatically different to what we believed. The Information reported in October 2024 that OpenAI’s revenue could be $4 billion, and inference costs $2 billion based on documents “which include financial statements and forecasts,” and specifically added the following:

OpenAI appears to be burning far less cash than previously thought. The company burned through about $340 million in the first half of this year, leaving it with $1 billion in cash on the balance sheet before the fundraising effort. But the cash burn could accelerate sharply in the next couple of years, the documents suggest.

I do not know how to reconcile this with what I am reporting today. In the first half of CY2024, based on the information in the documents, OpenAI’s inference costs were $1.295 billion, and its revenues at least $934 million.

Indeed, it is tough to reconcile what I am reporting with much of what has been reported about OpenAI’s costs and revenues.

So this is quite a gauntlet to have thrown down. Not only is he saying that OpenAI must have business-potential-wrecking compute costs, but his evidence indicates that OpenAI has also been making serious misrepresentations about costs and revenues. Because OpenAI is not public, OpenAI has not necessarily engaged in fraud; one presumes it has been accurate about money matters with those to whom it has financial reporting obligations. But if Zitron has this right, OpenAI has been telling howlers to other important stakeholders.

The Financial Times, with whom Zitron reviewed his data before publishing, is amplifying his findings. From How high are OpenAI’s compute costs? Possibly a lot higher than we thought:

Pre-publication, Ed was kind enough to discuss with us the information he has seen. Here are the inference costs as a chart:

The article then correctly presents caveats, as did Zitron in long form, along with kinda-sorta comments from Microsoft and OpenAI:

The best place to begin is by saying what the numbers don’t show. The above is understood to be for inference only…

More importantly, is the data correct? We showed Microsoft and OpenAI versions of the figures presented above, rounded to a multiple, and asked if they recognised them to be broadly accurate. We also put the data to people familiar with the companies and asked for any guidance they could offer.

A Microsoft spokeswoman told us: “We won’t get into specifics, but I can say the numbers aren’t quite right.” Asked what exactly that meant, the spokeswoman said Microsoft would not comment and did not respond to our subsequent requests. An OpenAI spokesman did not respond to our emails other than to say we should ask Microsoft.

A person familiar with OpenAI said the figures we had shown them did not give a complete picture, but declined to say more. In short, though we’ve been unable to verify the data’s accuracy, we’ve been given no reason to doubt it substantially either. Make of that what you will.

Taking everything at face value, the figures appear to show a disconnect between what’s been reported about OpenAI’s finances and the running costs that are going through Microsoft’s books…

As Ed writes, OpenAI appears to have spent more than $12.4bn at Azure on inference compute alone in the last seven calendar quarters. Its implied revenue for the period was a minimum of $6.8bn. Even allowing for some fudging between annualised run rates and period-end totals, the apparent gap between revenues and running costs is a lot more than has been reported previously. And, like Ed, we’re struggling to explain how the numbers can be so far apart.
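For readers who want to see where the seven-quarter totals come from, here is a minimal sketch (an aside, not part of the FT piece) that adds up the CY2024 and CY2025-through-September figures quoted earlier from Zitron. It assumes the same flat 20% revenue share; the variable names are illustrative.

```python
REVENUE_SHARE = 0.20  # flat share assumed, per the quoted reporting

# Figures quoted from Zitron's post: CY2024 plus CY2025 through September
# together cover seven calendar quarters.
inference_cost_busd = {"CY2024": 3.76, "CY2025 through September": 8.67}
revenue_share_musd = {"CY2024": 493.8, "CY2025 through September": 865.9}

# Total inference spend in $ billions.
total_inference = sum(inference_cost_busd.values())

# Minimum implied revenue in $ billions: payments / share, converted from $M.
total_implied_revenue = sum(revenue_share_musd.values()) / REVENUE_SHARE / 1000

# Apparent gap between inference spend and implied revenue, in $ billions.
gap = total_inference - total_implied_revenue

print(f"Inference spend:  ${total_inference:.2f}B")
print(f"Implied revenue:  ${total_implied_revenue:.2f}B (minimum)")
print(f"Apparent gap:     ${gap:.2f}B")
```

The totals line up with the "more than $12.4bn" and "minimum of $6.8bn" figures in the quoted passage, which suggests the FT derived them from the same underlying numbers.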

If the data is accurate (which we can’t guarantee, to reiterate, but we’re writing this post after giving both companies every opportunity to tell us that it isn’t), then it would call into question the business model of OpenAI and nearly every other general-purpose LLM vendor. At some point, going by the figures, either running costs have to collapse or customer charges have to rise dramatically. There’s no hint of either trend taking hold yet.

A quick search on Twitter finds no one yet attempting to lay a glove on Zitron. In the pink paper comments section, a few contend that Microsoft making weak protests about the data means it can’t be relied upon. While that is narrowly correct, one would expect a more robust debunking given the implications. And some of the supportive comments add value, like:

Bildermann

It explains why ChatGPT has become so dumb. They’re trying to reduce inference costs.

His name is Robert Paulson

The fact we have to use a gypsy with a magic 8 ball to figure out these numbers for the company that’s “going to revolutionize every industry” is more telling than the numbers themselves

No F1 key

Zitron has definitely been hitting that haterade, but Microsoft press saying the numbers ‘aren’t quite right’ makes me think this is pretty accurate.

manticore

That creaking noise is the lid being prized off the can of worms – MS had better get on top of this. That income stream is highly unlikely – becoz straight line etc etc – which means that their projections are going to be badly affected and presumably there has to be a K split in the projection line at some point. MS getting holed below the waterline has real-world impacts.

Multipass

I’ve been reading Ed’s blog for a while now and while he’s clearly biased in one direction, it comes across as infinitely more credible than anything Sam Altman has said in years.

The real issue in my eyes is that the revenue numbers are so opaque and obfuscated that nobody has any idea if any of this can make money.

The fact that Microsoft and Google seem to be intentionally muddying the waters when it comes to non-hosting-related LLM-driven revenues and that OpenAI and Anthropic have been disclosing basically nothing should come across as a major red flag, and yet nobody seems to care.

Indignant Analyst nonetheless

Spoiler alert: technology maturity will not help.

They can train and train and train ever larger models (parameter count in the trillions), feeding it all the data they can get or fabricate, using more powerful supercomputers than those running the physics simulations of the US nuclear arsenal. They can manually hack (which is why they need thousands of developers) additional logic around the model, fine tuned for more and more scenarios.

But it will all be just papering over the inescapable fact that a generative pre-trained transformer model is intelligence as much as CGI is reality: that is exactly zero, it’s all a crude, approximate imitation devoid of the underlying nature of the thing. GPTs, for example, cannot solve logical problems because GPT models lack the facilities to have a conceptual representation of a problem, or in themselves to hold onto any ‘thought’. That’s also why whenever you try to use a GPT to carefully fine tune a response, it mostly cannot, it will just regenerate everything even when explicitly instructed not to do so.

The essential question is: does it matter?

It could very well be that the imitation game will reach a level (with all that manual hacking and testing thousands of trajectories to select and condense the most likely response during inference) where it will be able to create and maintain the illusion of intelligence, even sentience, such that hundreds of millions will end up just using it anyway, regardless of accuracy or substance. There are early warnings of that already.

It also stands to reason that most tech bros know this, but go along with the game because 1) it’s all about relevance and engagement, there’s a lot of money to be made even from just imitation, and 2) most likely they believe they need to take part in this phase of AI development to be in position for the next one.

In any case, there is no path for GPT towards intelligence, it’s not a scaling or maturity issue.

Let us see if and when some shoes drop after this report. The bare minimum ought to be sharper questions at analyst calls.